Information manipulation

Information Manipulation/Misrepresentation

When THR is reported in the media, manipulation techniques are sometimes employed. The press may or may not be aware that this is happening, but often the attempt is so transparent, and the research required to prove it so trivial, that it is hard to argue they are not complicit.

Here are ways to spot this, including the less obvious omissions and questionable use of language.

This page can also be used to point out ways to detect such manipulation and what to look for, including people and sites that may have advice on the subject.

Definition

For the purposes of this page, misinformation is the passing on of information that is not correct and has been manipulated to state or imply misleading or false ideas. To count as misinformation, the entity passing the information on must be unaware that it has been manipulated, and must have no reason to verify that it is correct: a member of the public quoting a reputable newspaper, for example. A journalist or editor would be less able to claim this, since they can reasonably be expected to do at least 'due diligence' before printing something as fact.

Likewise, disinformation is the deliberate manipulation of information so as to change its context. An example is a newspaper editor choosing a minor statement discussed in a scientific paper or press release, instead of the main conclusion, because it increases the impact or distorts the view. This is particularly effective where the reader is unlikely to read the linked science; often few, if any, will take the time to check the source themselves.

Types of manipulation

General information

Our page specifically about nicotine misinformation is listed in the See also section.

The effects of subtle misinformation in news headlines. Ullrich K H Ecker*, Stephan Lewandowsky, Ee Pin Chang, Rekha Pillai School of Psychological Science, Bristol Neuroscience, Cabot Institute for the Environment (*Corresponding author)

Everything at once (the shotgun approach?)

ACSH article on the Cleveland press release (calling out blatant misinformation): ACSH writes about exactly the sort of misinformation detailed here, with great examples of how obvious this must be to the writers, and of how logic and reason are ignored.

- "The article is a simmering mixture of equivocation, cherry-picked statistics, and outright lies. There's an eight-letter word for it we won't use to preserve our family-friendly reputation. Let's look at some specifics and hopefully help Cleveland Clinic right its ship."

Creative use of language

(Mis)use of modal verbs

For example, the word 'may' is doing all the work here. When we read the article, and the study it reports on, we find that short-term changes in heart rate and blood pressure were detected, but nothing harmful or long term. Things such as drinking a cup of coffee, running for a bus, or receiving a surprise gift all cause similar changes.

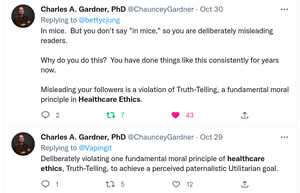

Others to look out for are 'might', 'probably', 'could' and all the usual suspects. It isn't uncommon to read that X probably causes Y, but on reading the full text to find that it's not actually possible to tell, or sometimes even that it probably doesn't. Such tactics can be argued to go against the principles of 'good ethics', particularly where (public) health is concerned. Where it is not likely to be obvious, context (as provided above with coffee etc.) should be given. This is not unreasonable: the public expects high standards when reading about things that are used to make health choices. Please see the next picture, particularly the second tweet pictured, regarding the reasons for being untruthful and why that isn't acceptable, particularly for trusted health organisations and professionals.

Omitting important information

Many media reports, and even press releases, seem to use the tactic pictured in the image: failing to mention that the results are from a study in mice.

Importantly, mice are not little people. At best, a mouse study can indicate areas of interest to look at in humans. Only if the mouse model produces results that can be reliably and repeatedly used to predict the outcome in humans can it be used this way. Otherwise it can be used to guide research and explore new ideas, but absolutely not to predict harm in humans.

Clearly the public should not be confused in this way, particularly if no evidence has been found that these results reflect what happens in humans. Omitting information that is required to make an informed decision, particularly information the public can't reasonably be expected to deduce or know, is a violation of ethics.

Dr Gardner has a PhD in developmental neurobiology and has taught healthcare ethics.

There is more information on the page Does nicotine damage the developing adolescent brain?, including the big issue: many millions worldwide started smoking in their teens. If such damage occurred in humans, it would be trivial to find. This has been studied, and no such issues have been found.

Confusing use of numbers

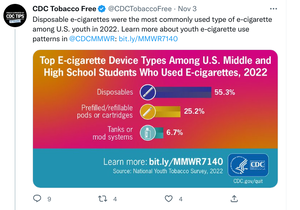

The definition of youth can vary, and the one used in an article or press release may be wildly different from the reader's expectation. This happens frequently and is highly variable: youth might refer to ages 12-25, 10-18, 12-21 and so on, and even wider ranges have been seen at times. Often the definition is absent, or buried somewhere in the text. Definitions of children can be equally ambiguous: some ranges used are as wide as those for 'youth' above and may include some adults, which is clearly not what most people expect. As above, the range, if given at all, can be hard to find.

Another common tactic is to present numbers in unusual or unexpected ways. Examples include percentages of percentages. For example, if 10% of American youth vape and 25% (one in 4) vape daily, it is very easy to get the impression that 1 in 4 youth vape daily. If you read carefully, you can see that the 25% is in fact 25% of the 10%. This has led to people saying that 25% of American youth vape daily, quite often (not always) because they didn't understand the information.
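The arithmetic behind a percentage of a percentage can be checked in a few lines. The following is a minimal Python sketch using the illustrative 10% and 25% figures from the paragraph above; the function name `share_of_whole` is hypothetical and the numbers are not real survey data.

```python
def share_of_whole(group_pct: float, subgroup_pct: float) -> float:
    """Return the subgroup as a percentage of the whole population.

    group_pct: percentage of the whole population in the group
               (e.g. youth who vape).
    subgroup_pct: percentage of that group in the subgroup
                  (e.g. vapers who vape daily).
    """
    return group_pct * subgroup_pct / 100


# Illustrative figures from the text, not real survey data.
daily_pct = share_of_whole(10, 25)
print(f"{daily_pct:.1f}% of all youth vape daily")
```

With these figures the result is 2.5% of all youth (1 in 40), not 25% (1 in 4).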

Another tactic is lumping several categories together to create a new one, for example summing all the categories and calling the result 'ever use', or something even more obscure.

Graphs that are confusing, or that lack a scale or other context

The screenshot shown includes a tweet that is misleading because it lacks context. It can easily be taken to say that 55.3% of youth use disposable devices, when it is again a percentage of a percentage: 55.3% of 9.8%, or roughly 5.4%. It is no wonder that even those doing things right can become confused.

Agencies like the CDC, when communicating with the general public, have a responsibility to make the information clear and easy to understand. You can reasonably expect such a graph to contain the information required to see what scale is used, and what it represents, without having to click a link to further information.

Presenting results of chemical analysis without comparison to exposure levels that may cause harm, or legal limits for workplace exposure.

Analytical techniques such as Gas Chromatography/Mass Spectrometry can detect tiny quantities of compounds in a sample. Limits Of Detection are becoming lower as electronics and computing increase in power and efficiency, and equipment manufacturers compete with each other to detect tinier and tinier levels. This is a good thing for the most part, as anything that is present, no matter how tiny the amount, will be detected. However, this can be a problem too.

Some reports focus on the fact that nanoscopic quantities were discovered, and completely forget to compare the amounts with something sensible (like permitted workplace exposure limits), presumably because their data would be 'lost in the weeds' in comparison.

It is also not good practice to operate equipment at or near its LOD (Limit Of Detection). If a percentage of samples that can be expected to contain some trace of, for example, nicotine result in no detection, then it is safe to assume the instrument is operating at the extreme of its capability. Most of the time, manufacturers and good practice suggest avoiding the extreme ends of the range, due to issues such as noise at the low end and detector swamping at the high end. A more commonly encountered example: an audio amplifier will sound best when operated somewhere in the middle of its power output range (too low, and noise can be apparent; too high, and distortion becomes a problem). Scientific equipment is not so very different: it uses electronics, and the laws of physics still apply.
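Putting a detected amount next to a reference limit takes one line of arithmetic. The sketch below is a minimal Python illustration; the function name `percent_of_limit` and both numbers are hypothetical, chosen only to show the calculation, and are not taken from any study or regulation.

```python
def percent_of_limit(measured: float, limit: float) -> float:
    """Express a measured level as a percentage of a reference limit.

    Both values must be in the same units
    (for example, micrograms per cubic metre).
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    return measured / limit * 100


# Hypothetical values for illustration only.
measured_level = 0.5     # amount detected in the sample
workplace_limit = 1000   # permitted workplace exposure limit, same units
pct = percent_of_limit(measured_level, workplace_limit)
print(f"Measured level is {pct:.2f}% of the limit")
```

A report that gives only the measured level leaves the reader unable to make this comparison.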

Unusual or confusing definitions

Using unexpected definitions, e.g. 'frequent use' being defined as use once in a month, which is far from most people's expectation.

Use of ratios of users to non-users, or between groups of users. This can make it appear that use in one group has increased when in fact use has decreased in both groups, but not in equal measure. The proportion of users in one group has increased even though both groups saw a decline. This is often hard to detect, because the information needed to make a fair comparison is missing. It is possibly one of the most underhand tactics, since it can present a large decrease as an apparent increase, and it sometimes takes a great deal of effort to get an accurate understanding.
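A small worked example shows how a share can rise while every count falls. This is a minimal Python sketch; the function name `share_of_users` and all counts are hypothetical and chosen only to illustrate the effect.

```python
def share_of_users(group_a_users: int, group_b_users: int) -> float:
    """Return group A's users as a percentage of all users in both groups."""
    return 100 * group_a_users / (group_a_users + group_b_users)


# Hypothetical user counts for illustration only.
before = {"a": 400, "b": 600}
after = {"a": 300, "b": 200}

share_before = share_of_users(before["a"], before["b"])
share_after = share_of_users(after["a"], after["b"])

print(f"Group A users: {before['a']} -> {after['a']}")
print(f"Group B users: {before['b']} -> {after['b']}")
print(f"Group A share of all users: {share_before:.0f}% -> {share_after:.0f}%")
```

Here use fell in both groups, and total users halved from 1000 to 500, yet group A's share of all users rose from 40% to 60%. Reporting only the share would suggest an increase.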

Flat out lies

The recycling of EVALI ('e-cigarette or vaping product use-associated lung injury') is a prime example. We have a whole page for EVALI, and it has been debunked so often by now that it is almost painful to see it reappear. It is a condition caused by inhaling Vitamin E acetate, which is soluble in oil (such as that used in black market THC carts). It will not even mix with nicotine-containing liquids, which use water-soluble chemistry, without defying the laws of physics.

Popcorn lung: there has never been a case linked to vaping, or to smoking (smoking exposes users to approximately 750 times the amount of diacetyl that vaping does). If smoking doesn't result in popcorn lung, vaping can't possibly do so.

Image manipulation

New York Times article by Elisabeth Bik

Dr. Bik is a microbiologist who has worked at Stanford University and for the Dutch National Institute for Health. She works to find such issues and bring them to attention; please see the article for details.

She is also active on Twitter

Issues with 'Spin' of results, either by the writers of the paper, or press release (or both)

Careful analysis of many e-cigarette/HTP studies shows that conclusions are often misreported, with nonsignificant findings being presented as significant or as demonstrating an effect (spin bias).

CoEHAR researchers in their article:

- have developed a two-step technique and objectively identified that about 30% of studies exhibited spin bias of nonsignificant findings.

- The distortion of scientific data and the unbridled search for sensationalist headlines is dragging the academic research into an abyss of non-credibility.

- Renée O'Leary, Giusy Rita Maria La Rosa, Robin Vernooij, Riccardo Polosa PMID: 37131244 PMCID: PMC10155298 DOI: 10.1186/s13104-023-06321-2

See also

See our page relating to poor quality research: Substandard research. Be aware that even good science can be misrepresented, though often the two issues go hand in hand.

Suggestions for additions to this page

Here you may add links or information from credible sources, examples of problems 'in the wild', screenshots etc. for our regular page editors to address. All information must be factual and based on evidence; anything without sufficient evidence will be deleted.

Instructions for editors of this page

Warning: contentious subject. Page authors, please take care to remain factual and include evidence/examples.

External links

https://scienceintegritydigest.com/about/ Science Integrity Digest focuses on image manipulation in scientific papers.